By The Metric Maven

Bulldog Edition

Some years back I became involved in evaluating the results of an experiment that clearly had scientific issues. I assisted two other volunteers and was mostly there to critique the experimental methods. Early on, I asked whether the answer was as simple as the data having been cooked. One of the volunteers was a graduate student in mathematics. He looked at me and said, “No, it’s fine, I already looked at the data.” I was a bit puzzled and wanted to know why he had such confidence that the data had not been altered. The mathematician said, “The data is consistent with Benford’s Law.” I had no idea what that was, and was surprised to hear that, generally, the first significant digit of numerical data is not random. The distribution of 1’s, 2’s, 3’s and so on up to 9 is not uniform. A one is more probable than a two, and the digits all follow a statistical pattern.

My mind had a very difficult time accepting this statement. The mathematician told me to “go look it up” which I did.

The story goes that Frank Benford (1883-1948), while working as an electrical engineer at General Electric, had obtained a well-used copy of a book of logarithms. He noticed that the beginning pages were the most soiled and worn. The idea that people would most often look up logarithms of numbers beginning with one, then those beginning with two, and so on up to nine, was surprising. Benford wrote up his observation, which is now often called Benford’s Law. However, Simon Newcomb (1835-1909) had already published the same observation in 1881.

I had a hard time accepting this because it meant that the first significant digit of a number is not equally likely to be any of the nine digits. The mathematical analysis to derive Benford’s law is beyond my expertise.[1] Sven pointed out that Warren Weaver (1894-1978), in his book Lady Luck, gives a reasonably intuitive explanation of how Benford’s Law comes to be. The relevant section is called The Distribution of Significant Digits, and does not mention Benford directly. Weaver makes this statement: “Although it remained unsuspected or at least unidentified for centuries, this distribution law for first integers is a built-in characteristic of our number system.”

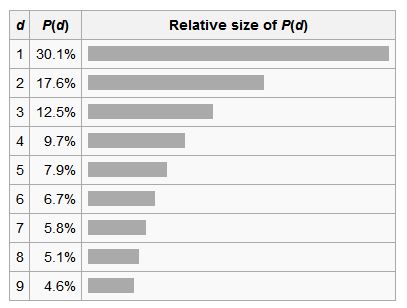

Here is a nice graph from Wikipedia showing the distribution of the first significant digit for numerical data:

Thirty percent of the commonly used physical constants found in an elementary physics textbook have one as their first significant digit. Census populations follow Benford’s Law, as do income tax data and one-day returns on the Dow Jones Industrial Average and Standard & Poor’s indexes. Benford’s Law is often used in forensic accounting to screen for fraud.
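To make the forensic-screening idea concrete, here is a minimal Python sketch (an illustration, not a forensic-accounting standard). It tallies the first digits of a data set and compares them with Benford’s expected proportions, P(d) = log10(1 + 1/d), using a Pearson chi-square statistic. The data set, the powers of two from 2^0 to 2^999, is a stand-in assumed for illustration because such geometric sequences are known to follow Benford’s Law closely; the helper names are hypothetical.

```python
import math
from collections import Counter

def benford_expected(d: int) -> float:
    """Benford probability that the first significant digit is d (1..9)."""
    return math.log10(1 + 1 / d)

def first_digit_counts(values):
    """Count the first digits of positive integers by reading their decimal strings."""
    counts = Counter(int(str(v)[0]) for v in values if v > 0)
    return [counts.get(d, 0) for d in range(1, 10)]

def chi_square_vs_benford(counts):
    """Pearson chi-square statistic of observed first-digit counts against Benford."""
    n = sum(counts)
    stat = 0.0
    for d, observed in zip(range(1, 10), counts):
        expected = n * benford_expected(d)
        stat += (observed - expected) ** 2 / expected
    return stat

# Stand-in data (assumption): powers of two, which closely follow Benford's Law.
data = [2 ** k for k in range(1000)]
counts = first_digit_counts(data)
for d, c in zip(range(1, 10), counts):
    print(d, c, round(len(data) * benford_expected(d), 1))
print("chi-square:", round(chi_square_vs_benford(counts), 2))
```

With nine digits there are 8 degrees of freedom, so a statistic well above roughly 15.5 (the 5% critical value) would flag a data set for a closer look. A flag is a prompt to investigate, not proof that anything was cooked.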

At this point, I want you to note something about this plot of Benford’s Law: what is the probability of zero for the first significant digit? Well, there isn’t one. If you add up all the probabilities you end up with 100%, so no probability is assigned to zero for the first significant digit, or should it be called the first insignificant digit?
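The point about zero can be checked mechanically. Here is a minimal Python sketch (the helper name `first_significant_digit` is hypothetical) using the standard-library `decimal` module, whose stored coefficient never keeps leading zeros, so for any nonzero value the first significant digit is always 1 through 9:

```python
from decimal import Decimal

def first_significant_digit(text: str) -> int:
    """Return the first significant digit (1-9) of a nonzero, finite decimal string."""
    value = Decimal(text)
    if not value.is_finite() or value.is_zero():
        raise ValueError("value has no first significant digit")
    # as_tuple().digits is the coefficient without leading zeros,
    # so its first entry is the first significant digit.
    return value.as_tuple().digits[0]

for s in ["0.0042", "540", "1.68", "-0.05", "9"]:
    print(s, first_significant_digit(s))
```

Leading zeros in a value such as 0.0042 only locate the decimal point, which is why zero never shows up in the distribution.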

I had given thought to discussing significant digits in the past, but there are differing views about how to go about determining significant figures in calculations, and so I tended to shy away from any discussion of the topic. Not until a reader took me to task over a statement I made in a blog about the 100th anniversary of the USMA did I decide it was worth some examination:

Also, the Maven writes: “The world record eyebrow hair is touted as 9 centimeters (90 millimeters for those with a refined measurement sense).”

In this case, since the measurement apparently was not to the nearest 0.1 cm, writing it as 90 millimeters would be false precision. (Of course if it were given as, say 9.2 cm, then 92 mm would be better.)

Thus, centimeters should be considered in such circumstances to avoid any indication of false precision; otherwise, centi-, and deci-, deka, and hecto-, should be considered as sort of “informal prefixes”…

While there is a lot of disagreement about how to determine significant digits, the one statement about them which is generally accepted is that adding zeros on the right side of a whole number does not constitute adding significant digits.

Here is a statement from Learn How To Determine Significant Figures:

If no decimal point is present, the rightmost non-zero digit is the least significant figure. In the number 5800, the least significant figure is ‘8’.

Another university website has:

…Trailing zeros in a whole number with no decimal shown are NOT significant. Writing just “540” indicates that the zero is NOT significant, and there are only TWO significant figures in this value.
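The rules quoted above are mechanical enough to code. Below is a minimal Python sketch, `count_sig_figs` (a hypothetical helper), that applies exactly those conventions to plain decimal strings: leading zeros never count, trapped zeros always count, and trailing zeros count only when a decimal point is shown. It deliberately ignores scientific notation and any outside information about how the value was measured.

```python
def count_sig_figs(text: str) -> int:
    """Count significant figures in a plain decimal string using textbook conventions."""
    s = text.replace(" ", "").lstrip("+-")  # allow "90 000" style digit grouping
    has_point = "." in s
    digits = s.replace(".", "").lstrip("0")  # leading zeros are never significant
    if not digits:
        raise ValueError("no nonzero digits")
    if not has_point:
        # Whole number with no decimal point: trailing zeros are placeholders.
        digits = digits.rstrip("0")
    # Whatever remains, including trapped zeros, is significant.
    return len(digits)

for s in ["5800", "540", "402", "420", "0.0042", "9.20", "90 000"]:
    print(s, count_sig_figs(s))
```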

Wikipedia has this to say:

The significant figures of a number are digits that carry meaning contributing to its measurement resolution. This includes all digits except:

- All leading zeros;

- Trailing zeros when they are merely placeholders to indicate the scale of the number (exact rules are explained at identifying significant figures)……

Wikipedia in its rules for identifying significant figures states:

In a number without a decimal point, trailing zeros may or may not be significant. More information through additional graphical symbols or explicit information on errors is needed to clarify the significance of trailing zeros.

If a whole number is encountered without any context, its trailing zeros should be assumed to be insignificant unless the text specifies otherwise. Clearly, when I pointed out that 9 cm would be better written as 90 mm, I did not conjure up an extra significant digit and imply more measurement resolution. The commentator made an unwarranted assumption about the 9 cm value: “since the measurement apparently was not to the nearest 0.1 cm, writing it as 90 millimeters would be false precision.” He is acting as a psychic and divining what precision was implied by the person who measured the value and offered it as 9 cm. What the commentator offered up is actually an example of false precision. There is no reason to assume the measurement was or was not to the nearest 0.1 cm, 0.05 cm or 0.025 cm. Only a single integer with a single significant figure, 9, is offered. Adding a zero on the end and expressing it in millimeters, without providing any additional information, alters that fact not one bit.

In this situation the zero is just a final placeholder; adding it to the end does not increase the implied precision, and it does not become a significant figure. The first significant digit is still nine for 90 mm, as it was for 9 cm.

“Trapped zeros” are considered significant. In the number 402, the zero between the 4 and the 2 is significant, but a trailing zero such as the one in 420 is not. Adding any number of trailing zeros to an integer does not increase the number of significant digits. When I pointed out that Metric Today should change centimeter values to millimeter values exclusively, I only changed 9 cm to 90 mm. I can equivalently write 9 cm as 90 mm, 90 000 µm or 90 000 000 nm without introducing any extra “implied precision.” Unless I tell you that 90 mm is a value with two significant figures, you should assume the zero is not significant. The centimeter is a coarse enough unit of length that, when used for everyday measurement, any useful value will need a decimal point, and is more appropriately written in millimeters.
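One way to see that writing 9 cm as 90 mm, 90 000 µm or 90 000 000 nm adds no information is to store the value the way Python’s standard `decimal` module does: as a coefficient and a power-of-ten exponent. In this minimal sketch (the prefix table and the `convert` helper are hypothetical illustrations), changing the prefix only shifts the exponent via `Decimal.scaleb`, so the coefficient’s digits, and hence the count of significant figures, are untouched.

```python
from decimal import Decimal

# Power-of-ten exponent of each unit relative to the meter (illustrative subset).
PREFIX_EXPONENT = {"m": 0, "cm": -2, "mm": -3, "µm": -6, "nm": -9}

def convert(value: Decimal, from_unit: str, to_unit: str) -> Decimal:
    """Re-express a length with another SI prefix by shifting only the exponent."""
    shift = PREFIX_EXPONENT[from_unit] - PREFIX_EXPONENT[to_unit]
    return value.scaleb(shift)

eyebrow = Decimal("9")  # 9 cm, one significant figure
for unit in ["cm", "mm", "µm", "nm"]:
    converted = convert(eyebrow, "cm", unit)
    print(unit, converted, "coefficient digits:", converted.as_tuple().digits)

print(convert(Decimal("9.2"), "cm", "mm"))  # two significant figures in, two out
```

Python prints 9 cm in millimeters as `9E+1`, that is 9 × 10^1 mm: numerically 90 mm, still with a single significant digit. A value given as 9.2 cm becomes 92 mm, with both digits carried over.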

The “implied precision” argument against using millimeters exclusively in everyday life has an appearance of technical relevance, but it is no more than an ad hoc truthiness statement. Every day it is empirically shown to be vacuous by those who construct metric buildings in Australia, Bangladesh, Botswana, Cameroon, India, Kenya, Mauritius, New Zealand, Pakistan, South Africa, the United Kingdom, and Zimbabwe. It is also theoretically superficial when examined carefully. Adopting knee-jerk contrarianism mantled in truthiness does not contribute to human understanding; it only attempts to squelch it.

Why is this question worthy of an entire blog? Because we probably get more flak on the millimeter vs centimeter question than any other. And the flak comes from metric advocates. Occasionally, it comes from a metric advocate whom we admire. And yet, the argument for keeping the centimeter hanging around like an albatross is always based on a misunderstanding of precision: the notion that that extra zero has some meaning beyond establishing scale. It doesn’t. Scientists, engineers, and mathematicians are all in accord that it doesn’t. It really isn’t even a metric question, but it’s only metric advocates that aren’t on the same page here. Odd, that.

[1] Hill, Theodore P., “A Statistical Derivation of the Significant-Digit Law,” Georgia Institute of Technology, 1996-03-20.

If you liked this essay and wish to support the work of The Metric Maven, please visit his Patreon Page and contribute. Also purchase his books about the metric system:

The first book is titled Our Crumbling Invisible Infrastructure. It is a succinct set of essays that explain why the absence of the metric system in the US is detrimental to our personal health and our economy. These essays are available separately for free on my website, but the book collects them all in one place in print. The book may be purchased from Amazon here.

The second book is titled The Dimensions of the Cosmos. It takes the metric prefixes from yotta to yocto and uses each one to describe a metric world. The book has a considerable number of color images to complement the prose. It has been receiving good reviews. I think it would be a great reference for US science teachers. It contains many scientific factoids and anecdotes that I believe would be of considerable educational use. It is available from Amazon here.

The third book is called Death By A Thousand Cuts, A Secret History of the Metric System in The United States. This monograph explains how the United States has been unable to deal legally with weights and measures, from George Washington’s time to the present day. This book is also available on Amazon here.

You’ll probably see the inch go away before the cm. Just be glad that there aren’t 3600 mm and 120 cm and 3 dm in a meter or something crazy like that. Using the other prefixes does offer an opportunity to lose some of those zeros when they appear as trailing digits. More to remember? Yes. As confusing as imperial/USC/whatever the nomenclature is? No. To me: six of one, half a dozen of the other.

Trevor Noah had something to say about imperial measures vs metric in his “African American” show, aired on Comedy Central on 2016-02-27.

meter on

The Maven writes:

The commentator made an unwarranted assumption about the 9 cm value: “since the measurement apparently was not to the nearest 0.1 cm, writing it as 90 millimeters would be false precision.” He is acting as a psychic and divining what precision was implied by the person who measured the value and offered it as 9 cm.

—–

The commentator (me) made the assumption because he used common sense, namely that a record eyebrow hair reported as 9 cm had no need for more precision.

Perhaps a better example would be a person’s height. How many persons worldwide would give their height to 1-mm precision? For example, my height is (about) 168 cm (1.68 m), but how in the world would I even measure such to 1-mm precision? (Even if I could, such would typically be unnecessary.) [Somewhat related is something I noticed: in some countries heights are given in centimeters (such as 168 cm) while in others they are given in meters (such as 1.68 m). Perhaps the Maven should investigate such and write an essay on his findings (assuming he didn’t already).]

Wrt Benford’s Law, the Maven should have given its probability distribution function algebraically, which is simply

P(d) = log(1 + 1/d) for d = 1, 2, 3, …, 9, where log is the base-10 logarithm.

Substituting, we get, to the nearest 0.001, P(1) = log(2/1) = 0.301, P(2) = log(3/2) = 0.176, P(3) = log(4/3) = 0.125, …, P(9) = log(10/9) = 0.046, as in the Maven’s tabular distribution above.

Btw, because of round-off error, such tabular distributions might not sum to one (100%), but the exact probabilities can be shown to sum to one without making such a table:

log(2/1) + log(3/2) + log(4/3) + … + log(10/9)

= log[(2/1)x(3/2)x(4/3)x…x(10/9)]

= log[10/1]

= 1

[QED!]
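The same formula can be tabulated numerically. A minimal Python sketch using only `math.log10` (the helper name `benford` is hypothetical): it prints each P(d) to the nearest 0.001 and checks that the unrounded probabilities sum to 1 up to floating-point round-off, a numerical counterpart of the telescoping identity.

```python
import math

def benford(d: int) -> float:
    """Benford probability P(d) = log10(1 + 1/d) for first digit d in 1..9."""
    if not 1 <= d <= 9:
        raise ValueError("first significant digit must be 1..9")
    return math.log10(1 + 1 / d)

probs = {d: benford(d) for d in range(1, 10)}
for d, p in probs.items():
    print(d, f"{p:.3f}")  # to the nearest 0.001

total = math.fsum(probs.values())
print("sum:", total)  # 1, up to floating-point round-off
```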

I believe you completely missed the point of the article. Describing your height as 1680 mm in no way implies mm-level precision in the measurement. The trailing zero is insignificant, meaning it might actually be a 1 or a 4, but wasn’t measured, so don’t worry about it.

Heck, with that measurement it’s entirely possible your height is actually 1675 mm, rounded up. The zero is NOT significant.

If you truly can’t wrap your brain around significant figures, I don’t blame you. It took me a LONG time to accept that 12×12=140. Describe your height as 1.68 m and be done with it.

Thanks for your comments, TL. Wrt 168 cm, such would imply my height x is in the interval 167.5 < x < 168.5, or, 1675 < 10x < 1685 in millimeters.

Thus, using 1680 mm for 168 cm could very well indicate spurious mm-level precision, whether we use the silly word insignificant or not.
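The interval reasoning in this exchange can be written down directly. Here is a minimal Python sketch, assuming (as both commenters do) that a reported value was rounded to the nearest multiple of its resolution, so the true value lies within half a resolution step of it. The helper name `rounding_interval` is hypothetical. Under this assumption, whether 1680 mm claims 1-mm precision depends entirely on which resolution the reader assigns to the trailing zero.

```python
from fractions import Fraction

def rounding_interval(reported, resolution):
    """Bounds of true values that round to `reported` at the given resolution."""
    half = Fraction(resolution) / 2
    return (Fraction(reported) - half, Fraction(reported) + half)

cm = rounding_interval(168, 1)           # 168 cm, to the nearest centimeter
mm_coarse = rounding_interval(1680, 10)  # 1680 mm, trailing zero not significant
mm_fine = rounding_interval(1680, 1)     # 1680 mm, read as nearest millimeter

print("168 cm          ->", [float(x * 10) for x in cm], "mm")
print("1680 mm (10 mm) ->", [float(x) for x in mm_coarse], "mm")
print("1680 mm (1 mm)  ->", [float(x) for x in mm_fine], "mm")
```

Exact fractions are used so the half-step bounds print without binary floating-point noise.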

Wrt the concept of "significant digit", it is something we could just ignore. Why? Because we could write, as many involved with SI do, to give a value to, say, "the nearest 0.001", which, btw (unrelatedly), I just did on an exam I gave yesterday on elementary probability concepts ["Find each of the following probabilities to the nearest 0.001"].

Also, with respect to the word significant itself, perhaps we should use it when it truly has a meaning of importance, such as a "significant result" instead of wasting time with "significant digits", where something like "nearest 0.1" should suffice.

Significant digits are not a waste of time. I invite you to attempt an error analysis on an experiment without their use.

It’s a relatively simple concept that MUST be understood in order to perform any meaningful science.

TL:

When a person with my background thinks of “error”, we think of things such as precision, as in the not uncommon phrase “margin of sampling error”, where the phrase “significant digits” is not really relevant. [You may have heard about that important position paper by the American Statistical Association earlier this week on P-values. I really don’t see a “significant digit” issue whatsoever with a P-value (although I do, of course, see a well-known use of the word “significant” with such).]

Thus, please give us an example of an error analysis where the concept of significant digits MUST be used instead of using something like “to the nearest 0.001”.

The problem is that the symbol 0 is being used in two different senses. In one sense, it means “not present”; in the other, it means “unknown and/or beneath our concern”. The resulting ambiguity is unfortunate; however, one can dodge it by using scientific notation.
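Scientific notation makes the intended number of significant figures explicit. A minimal Python sketch using the standard `e` format specifier, where the precision after the point is one less than the number of significant figures being claimed; `to_sig_figs` is a hypothetical helper name.

```python
def to_sig_figs(value: float, sig_figs: int) -> str:
    """Format value in scientific notation with exactly sig_figs significant figures."""
    if sig_figs < 1:
        raise ValueError("need at least one significant figure")
    return f"{value:.{sig_figs - 1}e}"

print(to_sig_figs(90, 1))       # 9e+01: the zero is only a placeholder
print(to_sig_figs(90, 2))       # 9.0e+01: the zero was actually measured
print(to_sig_figs(1680, 3))     # 1.68e+03
print(to_sig_figs(12 * 12, 2))  # 1.4e+02
```

Written this way, 9 × 10^1 mm and 9.0 × 10^1 mm can no longer be confused, which is the ambiguity the trailing zero in “90” leaves open.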